Compare commits

27 commits

efigueroa-

...

main

| Author | SHA1 | Date |
|---|---|---|
| | 84ad4f6402 | |
| | 2c5cc6607f | |
| | fe0a81bb4f | |
| | 621ec7e4f4 | |
| | 5f99171e71 | |
| | 7d78b96eff | |
| | da421bc296 | |
| | 583e73f79b | |
| | c4d5eff4e5 | |
| | 39cadd7479 | |
| | 2adfc5b384 | |
| | b05e8d41d2 | |
| | f6b3ed839c | |
| | bd833f5fba | |
| | 6fa584bdf2 | |
| | f251f666fb | |
| | c154a52545 | |
| | 4e5386354f | |
| | 00e856d024 | |
| | 5a1ec869b2 | |
| | 211749a94f | |
| | 219064a540 | |
| | 3eaef9c717 | |
| | 058f4f4f27 | |
| | f87285ac84 | |
| | 11f9eac585 | |
| | 9a81f674aa | |

31 changed files with 1187 additions and 403 deletions

112

.forgejo/workflows/deploy.yml

Normal file

|

|

@ -0,0 +1,112 @@

|

||||||

|

# Forgejo Actions Workflow for Hugo Blog Auto-Deployment

|

||||||

|

#

|

||||||

|

# This workflow automatically builds and deploys your Hugo site when you push to main

|

||||||

|

|

||||||

|

name: Build and Deploy Hugo Site

|

||||||

|

|

||||||

|

on:

|

||||||

|

push:

|

||||||

|

branches:

|

||||||

|

- main

|

||||||

|

workflow_dispatch: # Allow manual triggering

|

||||||

|

|

||||||

|

jobs:

|

||||||

|

deploy:

|

||||||

|

runs-on: docker

|

||||||

|

|

||||||

|

steps:

|

||||||

|

- name: Checkout repository

|

||||||

|

uses: actions/checkout@v4

|

||||||

|

with:

|

||||||

|

submodules: recursive # Required for Hugo themes

|

||||||

|

fetch-depth: 0 # Fetch full history for .GitInfo and .Lastmod

|

||||||

|

|

||||||

|

- name: Generate timestamp

|

||||||

|

id: timestamp

|

||||||

|

run: echo "BUILD_TIME=$(date +%Y%m%d-%H%M%S)" >> $GITHUB_OUTPUT

|

||||||

|

|

||||||

|

- name: Set up Go

|

||||||

|

uses: actions/setup-go@v5

|

||||||

|

with:

|

||||||

|

go-version: '1.24'

|

||||||

|

cache: true

|

||||||

|

|

||||||

|

- name: Install Hugo

|

||||||

|

run: |

|

||||||

|

go install -tags extended github.com/gohugoio/hugo@latest

|

||||||

|

echo "$(go env GOPATH)/bin" >> $GITHUB_PATH

|

||||||

|

|

||||||

|

- name: Verify Hugo installation

|

||||||

|

run: hugo version

|

||||||

|

|

||||||

|

- name: Build Hugo site

|

||||||

|

run: hugo --minify

|

||||||

|

|

||||||

|

- name: Set up SSH

|

||||||

|

run: |

|

||||||

|

mkdir -p ~/.ssh

|

||||||

|

echo "${{ secrets.SSH_PRIVATE_KEY }}" > ~/.ssh/deploy_key

|

||||||

|

chmod 600 ~/.ssh/deploy_key

|

||||||

|

echo "${{ vars.SSH_KNOWN_HOSTS }}" > ~/.ssh/known_hosts

|

||||||

|

|

||||||

|

- name: Create deployment directory

|

||||||

|

env:

|

||||||

|

SSH_HOST: ${{ vars.SSH_HOST }}

|

||||||

|

SSH_USER: ${{ vars.SSH_USER }}

|

||||||

|

SSH_PORT: ${{ vars.SSH_PORT }}

|

||||||

|

DEPLOY_PATH: ${{ vars.DEPLOY_PATH }}

|

||||||

|

BUILD_TIME: ${{ steps.timestamp.outputs.BUILD_TIME }}

|

||||||

|

run: |

|

||||||

|

ssh -i ~/.ssh/deploy_key -p ${SSH_PORT} ${SSH_USER}@${SSH_HOST} \

|

||||||

|

"mkdir -p ${DEPLOY_PATH}-${BUILD_TIME}"

|

||||||

|

|

||||||

|

- name: Deploy via SCP

|

||||||

|

env:

|

||||||

|

SSH_HOST: ${{ vars.SSH_HOST }}

|

||||||

|

SSH_USER: ${{ vars.SSH_USER }}

|

||||||

|

SSH_PORT: ${{ vars.SSH_PORT }}

|

||||||

|

DEPLOY_PATH: ${{ vars.DEPLOY_PATH }}

|

||||||

|

BUILD_TIME: ${{ steps.timestamp.outputs.BUILD_TIME }}

|

||||||

|

run: |

|

||||||

|

scp -i ~/.ssh/deploy_key -P ${SSH_PORT} -r public/* \

|

||||||

|

${SSH_USER}@${SSH_HOST}:${DEPLOY_PATH}-${BUILD_TIME}/

|

||||||

|

|

||||||

|

|

||||||

|

- name: Update symlink

|

||||||

|

env:

|

||||||

|

SSH_HOST: ${{ vars.SSH_HOST }}

|

||||||

|

SSH_USER: ${{ vars.SSH_USER }}

|

||||||

|

SSH_PORT: ${{ vars.SSH_PORT }}

|

||||||

|

DEPLOY_PATH: ${{ vars.DEPLOY_PATH }}

|

||||||

|

BUILD_TIME: ${{ steps.timestamp.outputs.BUILD_TIME }}

|

||||||

|

run: |

|

||||||

|

ssh -i ~/.ssh/deploy_key -p ${SSH_PORT} ${SSH_USER}@${SSH_HOST} \

|

||||||

|

"cd $(dirname ${DEPLOY_PATH}) && ln -sfn public-${BUILD_TIME} public"

|

||||||

|

|

||||||

|

- name: Restart blog container

|

||||||

|

env:

|

||||||

|

SSH_HOST: ${{ vars.SSH_HOST }}

|

||||||

|

SSH_USER: ${{ vars.SSH_USER }}

|

||||||

|

SSH_PORT: ${{ vars.SSH_PORT }}

|

||||||

|

run: |

|

||||||

|

ssh -i ~/.ssh/deploy_key -p ${SSH_PORT} ${SSH_USER}@${SSH_HOST} \

|

||||||

|

"cd /home/eduardo_figueroa/homelab/compose/services/edfigdev-blog && docker compose restart"

|

||||||

|

|

||||||

|

- name: Clean up old deployments

|

||||||

|

env:

|

||||||

|

SSH_HOST: ${{ vars.SSH_HOST }}

|

||||||

|

SSH_USER: ${{ vars.SSH_USER }}

|

||||||

|

SSH_PORT: ${{ vars.SSH_PORT }}

|

||||||

|

DEPLOY_PATH: ${{ vars.DEPLOY_PATH }}

|

||||||

|

run: |

|

||||||

|

ssh -i ~/.ssh/deploy_key -p ${SSH_PORT} ${SSH_USER}@${SSH_HOST} \

|

||||||

|

"cd $(dirname ${DEPLOY_PATH}) && ls -dt public-* | tail -n +6 | xargs rm -rf"

|

||||||

|

continue-on-error: true # Don't fail if cleanup fails

|

||||||

|

|

||||||

|

- name: Deployment summary

|

||||||

|

run: |

|

||||||

|

echo "✅ Hugo site deployed successfully!"

|

||||||

|

echo "Build time: ${{ steps.timestamp.outputs.BUILD_TIME }}"

|

||||||

|

echo "Deployed to: ${{ vars.SSH_HOST }}"

|

||||||

|

|

||||||

|

|

||||||
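The release layout used by the deploy steps above (a timestamped `public-<BUILD_TIME>` directory, a `public` symlink pointing at the newest one, and pruning of older releases) can be tried locally before trusting it over SSH. The following is a minimal sketch, not the workflow itself: `deploy_release` and `prune_releases` are hypothetical helper names, everything runs against a local directory instead of a remote host, and releases are sorted by the timestamp in their names rather than by modification time as `ls -dt` does in the workflow. Like the workflow, it assumes the served directory is called `public`.

```bash
#!/usr/bin/env bash
# Local sketch of the release layout the workflow builds over SSH.
# All function names here are hypothetical, chosen for illustration.

# deploy_release ROOT BUILD_TIME SRC
# Copy SRC into ROOT/public-BUILD_TIME, then repoint ROOT/public at it.
deploy_release() {
  local root="$1" build_time="$2" src="$3"
  local release="public-${build_time}"
  mkdir -p "${root}/${release}"
  # "src/." copies the directory contents, including dotfiles
  cp -R "${src}/." "${root}/${release}/"
  # -n replaces the link itself instead of creating a link inside the old target
  ( cd "${root}" && ln -sfn "${release}" public )
}

# prune_releases ROOT [KEEP]
# Delete all but the KEEP newest public-* directories (default 5).
# Assumption: names always end in a sortable BUILD_TIME stamp.
prune_releases() {
  local root="$1" keep="${2:-5}"
  (
    cd "${root}" || exit 1
    ls -d public-* 2>/dev/null | sort -r | tail -n "+$((keep + 1))" | xargs rm -rf
  )
}
```

Because the glob is `public-*`, the `public` symlink itself never matches and is not a candidate for deletion. Sorting by name is a deliberate swap here: it keeps the result deterministic even when several releases are created within the same second of filesystem time.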

79

.github/workflows/build.yml

vendored

|

|

@ -1,79 +0,0 @@

|

||||||

# Sample workflow for building and deploying a Hugo site to GitHub Pages

|

|

||||||

name: Deploy Hugo site to Pages

|

|

||||||

|

|

||||||

on:

|

|

||||||

# Runs on pushes targeting the default branch

|

|

||||||

push:

|

|

||||||

branches:

|

|

||||||

- main

|

|

||||||

|

|

||||||

# Allows you to run this workflow manually from the Actions tab

|

|

||||||

workflow_dispatch:

|

|

||||||

|

|

||||||

# Sets permissions of the GITHUB_TOKEN to allow deployment to GitHub Pages

|

|

||||||

permissions:

|

|

||||||

contents: read

|

|

||||||

pages: write

|

|

||||||

id-token: write

|

|

||||||

|

|

||||||

# Allow only one concurrent deployment, skipping runs queued between the run in-progress and latest queued.

|

|

||||||

# However, do NOT cancel in-progress runs as we want to allow these production deployments to complete.

|

|

||||||

concurrency:

|

|

||||||

group: "pages"

|

|

||||||

cancel-in-progress: false

|

|

||||||

|

|

||||||

# Default to bash

|

|

||||||

defaults:

|

|

||||||

run:

|

|

||||||

shell: bash

|

|

||||||

|

|

||||||

jobs:

|

|

||||||

# Build job

|

|

||||||

build:

|

|

||||||

runs-on: ubuntu-latest

|

|

||||||

env:

|

|

||||||

HUGO_VERSION: 0.128.0

|

|

||||||

steps:

|

|

||||||

- name: Install Hugo CLI

|

|

||||||

run: |

|

|

||||||

wget -O ${{ runner.temp }}/hugo.deb https://github.com/gohugoio/hugo/releases/download/v${HUGO_VERSION}/hugo_extended_${HUGO_VERSION}_linux-amd64.deb \

|

|

||||||

&& sudo dpkg -i ${{ runner.temp }}/hugo.deb

|

|

||||||

- name: Install Dart Sass

|

|

||||||

run: sudo snap install dart-sass

|

|

||||||

- name: Checkout

|

|

||||||

uses: actions/checkout@v4

|

|

||||||

with:

|

|

||||||

submodules: recursive

|

|

||||||

fetch-depth: 0

|

|

||||||

- name: Setup Pages

|

|

||||||

id: pages

|

|

||||||

uses: actions/configure-pages@v5

|

|

||||||

- name: Install Node.js dependencies

|

|

||||||

run: "[[ -f package-lock.json || -f npm-shrinkwrap.json ]] && npm ci || true"

|

|

||||||

- name: Build with Hugo

|

|

||||||

env:

|

|

||||||

HUGO_CACHEDIR: ${{ runner.temp }}/hugo_cache

|

|

||||||

HUGO_ENVIRONMENT: production

|

|

||||||

TZ: America/Los_Angeles

|

|

||||||

run: |

|

|

||||||

hugo \

|

|

||||||

--gc \

|

|

||||||

--minify \

|

|

||||||

--baseURL "${{ steps.pages.outputs.base_url }}/"

|

|

||||||

- name: Upload artifact

|

|

||||||

uses: actions/upload-pages-artifact@v3

|

|

||||||

with:

|

|

||||||

path: ./public

|

|

||||||

|

|

||||||

# Deployment job

|

|

||||||

deploy:

|

|

||||||

environment:

|

|

||||||

name: github-pages

|

|

||||||

url: ${{ steps.deployment.outputs.page_url }}

|

|

||||||

runs-on: ubuntu-latest

|

|

||||||

needs: build

|

|

||||||

steps:

|

|

||||||

- name: Deploy to GitHub Pages

|

|

||||||

id: deployment

|

|

||||||

uses: actions/deploy-pages@v4

|

|

||||||

|

|

||||||

3

.gitmodules

vendored

|

|

@@ -1,3 +1,6 @@
 [submodule "themes/PaperMod"]
 	path = themes/PaperMod
 	url = https://github.com/adityatelange/hugo-PaperMod.git
+[submodule "themes/hugo-book"]
+	path = themes/hugo-book
+	url = https://github.com/alex-shpak/hugo-book

|

||||||

|

|

|

||||||

|

|

@@ -1,4 +1,4 @@
 [](https://github.com/efigueroa/figsystems/actions/workflows/build.yml)
 
 
-Just my website hosted at https://fig.systems
+Just my website hosted at https://edfig.dev

|

||||||

|

|

|

||||||

18

content/_index.md

Normal file

|

|

@ -0,0 +1,18 @@

|

||||||

|

---

|

||||||

|

title: EdFig.dev

|

||||||

|

---

|

||||||

|

|

||||||

|

# <u>**Ed**</u>uardo <u>**Fig**</u>ueroa

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

---

|

||||||

|

Self-hosted blog for Eduardo Figueroa.

|

||||||

|

|

||||||

|

Subjects include

|

||||||

|

- Musings

|

||||||

|

- DIY projects

|

||||||

|

- linux

|

||||||

|

- IAC

|

||||||

|

- and other things.

|

||||||

5

content/docs/_index.md

Normal file

|

|

@ -0,0 +1,5 @@

|

||||||

|

---

|

||||||

|

title: Posts

|

||||||

|

bookFlatSection: true

|

||||||

|

weight: 1

|

||||||

|

---

|

||||||

55

content/docs/posts/FFB.md

Normal file

|

|

@ -0,0 +1,55 @@

|

||||||

|

---

|

||||||

|

title: "Fantasy Football Draft Tools"

|

||||||

|

date: "2024-08-29"

|

||||||

|

tags:

|

||||||

|

- reference

|

||||||

|

- draft

|

||||||

|

categories:

|

||||||

|

- fantasy-football

|

||||||

|

---

|

||||||

|

|

||||||

|

---

|

||||||

|

# Draft Tools

|

||||||

|

---

|

||||||

|

|

||||||

|

### > Beer Sheets

|

||||||

|

|

||||||

|

I used to recommend and use [Beer Sheets](https://footballabsurdity.com/draft-sheet-form/), a tool I found online that gave good results every time I used it. I've gotten good feedback from people I've recommended it to. The original creator has moved on after the 2023 season and the new team has yet to prove they're as good.

|

||||||

|

|

||||||

|

#### Usage

|

||||||

|

|

||||||

|

You can go to their main page and just copy over the settings from your league. For example here's a [direct link](https://footballabsurdity.com/draft-sheet-form/?teams=14&bn=5&qb=1&rb=2&wr=2&rwt=2&patd=6&rutd=6&retd=6&payd=0.04&ruyd=0.1&reyd=0.1&int=-1.0&rec=0.5&fum=-2.0) to the settings my family league is using.

|

||||||

|

|

||||||

|

There doesn't seem to be support for PPFD (point per first down), so I just kept it at half PPR.

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

# Season Long Tools, Podcasts, Youtube

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

## Tools

|

||||||

|

|

||||||

|

### > Fantasy Pros

|

||||||

|

|

||||||

|

[FantasyPros](https://www.fantasypros.com/). I've used both the free and paid versions. The free version is all you need while the paid tools offer quality-of-life (aka just figure it out for me) tools for trading and waiver wire pick ups.

|

||||||

|

|

||||||

|

The paid version does offer syncing multiple leagues but I just use [multiple throwaway accounts](/posts/domainandemail) to bypass the limit.

|

||||||

|

|

||||||

|

## Podcasts and Youtube

|

||||||

|

|

||||||

|

### > RotoBaller

|

||||||

|

|

||||||

|

[RotoBaller](https://www.rotoballer.com/nfl). Good articles for waiver, start/sit, and latest news on players.

|

||||||

|

|

||||||

|

### > Fantasy Footballers

|

||||||

|

|

||||||

|

[Fantasy Footballers](https://www.youtube.com/thefantasyfootballers) have a website, tools, a podcast, and a YouTube channel. I prefer the YouTube channel; it's great for background listening since the episodes are entertaining and long enough to cover doing stuff around the house.

|

||||||

|

|

||||||

|

### > Late Round with JJ Zachariason

|

||||||

|

|

||||||

|

[LateRound](https://lateround.com/#newsletter) is a podcast that covers basically the same content as the newsletter. The newsletter will usually have one extra bit of info to encourage signing up, e.g. the podcast covers 10 Waiver Wire Pickups while the newsletter has 11. Data-backed and pretty accurate.

|

||||||

|

|

||||||

|

### > Rams Brothers

|

||||||

|

|

||||||

|

[Rams Brothers](https://www.youtube.com/channel/UCOfbL0Rk-DwcNPy-zFvy8nA) Podcast and youtube channel. LA Rams talk.

|

||||||

5

content/docs/posts/_index.md

Normal file

|

|

@ -0,0 +1,5 @@

|

||||||

|

---

|

||||||

|

title: Posts

|

||||||

|

bookCollapseSection: true

|

||||||

|

weight: 1

|

||||||

|

---

|

||||||

34

content/docs/posts/bashrcoops.md

Normal file

|

|

@ -0,0 +1,34 @@

|

||||||

|

---

|

||||||

|

title: "Locking yourself out of SDDM with .bashrc"

|

||||||

|

date: "2026-03-01"

|

||||||

|

tags:

|

||||||

|

- linux

|

||||||

|

- rice

|

||||||

|

categories:

|

||||||

|

- linux

|

||||||

|

- til

|

||||||

|

---

|

||||||

|

# Transferring

|

||||||

|

|

||||||

|

I'm going to [SCaLE 23x](https://www.socallinuxexpo.org/scale/23x). I don't tend to use my laptop as a primary device, so I was transferring *everything*.

|

||||||

|

|

||||||

|

I literally can't live without my aliases and shortcuts, so in my rush I somehow moved a copy of my `.bashrc` into `~/.bashrc.d`. That doesn't sound like a big deal, except I have this in my main file.

|

||||||

|

|

||||||

|

```bash

|

||||||

|

if [ -d ~/.bashrc.d ]; then

|

||||||

|

for rc in ~/.bashrc.d/*.sh; do

|

||||||

|

if [ -f "$rc" ]; then

|

||||||

|

. "$rc"

|

||||||

|

fi

|

||||||

|

done

|

||||||

|

fi

|

||||||

|

```

|

||||||

|

|

||||||

|

Why load everything in? Because I'm lazy.

|

||||||

|

|

||||||

|

On the next login I found out I was stuck in a login loop. I got in through TTY3, checked the thousands of SDDM crash messages in `journalctl`, and removed the rogue file.

|

||||||

|

|

||||||

|

# The Fix

|

||||||

|

|

||||||

|

I've since changed the loop to only pick up files ending in `.sh`, so unwanted files don't get sourced.
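For anyone who wants a bit more protection than the extension filter, here is a minimal sketch of a loader with a re-entry guard. It is not what's in my dotfiles: `load_rc_dir` and the `_RC_DIR_LOADING` variable are hypothetical names, and the guard only covers the case where a sourced file ends up calling the loader again.

```bash
#!/usr/bin/env bash
# load_rc_dir DIR
# Source every regular *.sh file in DIR and ignore everything else.
# Hypothetical helper; the guard variable name is an assumption too.
load_rc_dir() {
  local dir="$1" rc
  [ -d "$dir" ] || return 0
  # Re-entry guard: if a sourced file calls the loader again, do nothing
  [ -z "${_RC_DIR_LOADING:-}" ] || return 0
  _RC_DIR_LOADING=1
  for rc in "$dir"/*.sh; do
    # An unmatched glob stays literal, so this test also covers an empty dir
    [ -f "$rc" ] || continue
    . "$rc"
  done
  unset _RC_DIR_LOADING
}
```

In `.bashrc` this would be called as `load_rc_dir ~/.bashrc.d`. One caveat of wrapping the loop in a function: a sourced file that uses `declare` without `-g` creates variables local to the function, so they vanish once the loader returns.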

|

||||||

|

|

||||||

|

|

@ -4,11 +4,16 @@ description: "If you need to reach me."

|

||||||

date: "2019-02-28"

|

date: "2019-02-28"

|

||||||

---

|

---

|

||||||

|

|

||||||

***

|

---

|

||||||

### E-mail

|

|

||||||

Eduardo_Figueroa@fig.systems

|

### E-mail

|

||||||

***

|

|

||||||

|

[Eddie@edfig.dev](mailto:Eddie@edfig.dev)

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

### Seldom used Socials

|

### Seldom used Socials

|

||||||

|

|

||||||

[@edfig@mastodon.social](https://mastodon.social/@edfig)

|

[@edfig@mastodon.social](https://mastodon.social/@edfig)

|

||||||

|

|

||||||

[@edfig.bsky.social](https://bsky.app/profile/edfig.bsky.social)

|

[@edfig.dev](https://bsky.app/profile/edfig.dev)

|

||||||

57

content/docs/posts/dnd-first-timeDM.md

Normal file

|

|

@ -0,0 +1,57 @@

|

||||||

|

---

|

||||||

|

title: "DND One Shot Recommendations"

|

||||||

|

date: "2026-03-19"

|

||||||

|

tags:

|

||||||

|

- ttrpg

|

||||||

|

- dnd

|

||||||

|

categories:

|

||||||

|

- games

|

||||||

|

---

|

||||||

|

|

||||||

|

# DND One Shot Recommendations

|

||||||

|

|

||||||

|

Some Recs and Resources. It's come up a few times so I made a page to quickly share with others.

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

## Death House

|

||||||

|

|

||||||

|

Horror themed, prequel to Curse of Strahd, [Death House](https://media.wizards.com/2016/downloads/DND/Curse%20of%20Strahd%20Introductory%20Adventure.pdf) is meant for 3rd to 5th level players. I've never run this. The only tip I know is to not let anyone 1v1 the broom lol.

|

||||||

|

|

||||||

|

**Review**

|

||||||

|

TBD :(

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

## Study in Marble

|

||||||

|

|

||||||

|

From the [DMsGuild.com page for it](https://www.dmsguild.com/en/product/408152/a-study-in-marble). It was also announced [via reddit](https://www.reddit.com/r/DnDBehindTheScreen/comments/x0t5am/a_study_in_marble_a_mystery_oneshot_based_on/), where they have some tips and suggestions.

|

||||||

|

|

||||||

|

> The richest man on the isle of Syklos has suddenly gone missing. He's been known to have some extramarital excursions in the past, but this time is different—he hasn't come back. Why did he disappear? Who is responsible? And what does the eccentric local sculptor have to do with it?

|

||||||

|

A Study in Marble is an adventure for a 3rd to 5th level party inspired by ancient Greek mythology. Players will unravel a mystery in an urban environment, with significant emphasis on social interaction and gathering clues; the module can be played as a one-shot or integrated into an existing campaign as a side quest. The adventure is especially suitable for campaigns set in the world of Theros, but can fit into any campaign with room for a Greek city.

|

||||||

|

|

||||||

|

This module includes:

|

||||||

|

- 3 maps, in both PDF and JPG format

|

||||||

|

- 4 possible endings, depending on the choices made by the party

|

||||||

|

- 1 central plot twist that will keep your players guessing

|

||||||

|

|

||||||

|

**Review**:

|

||||||

|

|

||||||

|

Roleplay heavy, fun to run.

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

## A Wild Sheep Chase

|

||||||

|

|

||||||

|

> A Single-Session Adventure for parties of 4th-5th level

|

||||||

|

The very first adventure produced by Winghorn Press, freshly updated with player feedback and suggestions,

|

||||||

|

as well as a brand new map. When the party's attempt to grab a rare afternoon of downtime is interrupted by a frantic sheep equipped with a

|

||||||

|

Scroll of Speak to Animals, they're dragged into a magical grudge match that will test their strength, courage and willingness to endure baa'd puns.

|

||||||

|

|

||||||

|

> Will our heroes be able to overcome a band of transmuted assassins and an extremely bitter apprentice packing dangerously unstable magic items? There’s only one way to find out.

|

||||||

|

|

||||||

|

[Free download here from their site](https://winghornpress.com/adventures/a-wild-sheep-chase/)

|

||||||

|

|

||||||

|

**Review**:

|

||||||

|

|

||||||

|

Can be either roleplay or combat driven. Good for new players or new DMs. I've got some minis for this one if you'd like to use them.

|

||||||

94

content/docs/posts/domainandemail.md

Normal file

|

|

@ -0,0 +1,94 @@

|

||||||

|

---

|

||||||

|

title: "Custom Domain and Emails"

|

||||||

|

date: "2024-10-07"

|

||||||

|

tags:

|

||||||

|

- dns

|

||||||

|

- email

|

||||||

|

categories:

|

||||||

|

- selfhosted

|

||||||

|

---

|

||||||

|

|

||||||

|

# What is this?

|

||||||

|

|

||||||

|

Let's say you wanted to buy a domain like `edfig.dev`. You can host a personal blog at this address. Once you buy the domain, not only can you host content, but with a bit more tinkering you can send and receive emails with it.

|

||||||

|

|

||||||

|

You can email `eddie@edfig.dev` or `admin@edfig.dev` and that email will make its way to my inbox. You can set up rules to handle specific addresses too.

|

||||||

|

|

||||||

|

You'll need to create accounts for the following:

|

||||||

|

- Mailgun.com

|

||||||

|

- porkbun.com

|

||||||

|

|

||||||

|

# Setting it up

|

||||||

|

|

||||||

|

## 1. The Domain

|

||||||

|

|

||||||

|

You can buy a domain from any registrar; I recommend [Porkbun](https://porkbun.com/) or [Cloudflare](https://www.cloudflare.com/products/registrar/). I'll be using Porkbun for this discussion.

|

||||||

|

|

||||||

|

Pricing will depend on the name and the TLD (the `.com` part). I occasionally run into issues with sites not recognizing `eddie@fig.systems` as a valid email address because it's a lesser-known TLD.

|

||||||

|

|

||||||

|

Once you have it you can enter DNS records to where you host stuff or start using it for email.

|

||||||

|

|

||||||

|

## 2. The Email

|

||||||

|

|

||||||

|

Email is one of those things you shouldn't host yourself, it's very annoying. But luckily there are services out there that take care of most of the hassle. [MailGun](https://www.mailgun.com/) and [SendGrid](https://sendgrid.com/en-us) are two such services. I'll be using Mailgun here.

|

||||||

|

|

||||||

|

With Mailgun I can:

|

||||||

|

- Receive emails at custom email addresses with my domain

|

||||||

|

- Route those emails based on rules

|

||||||

|

- e.g. Emails sent to `no-reply@edfig.dev` are completely dropped

|

||||||

|

- Send emails AS those email addresses through gmail

|

||||||

|

- Receive an email at `admin@edfig.dev` at my regular gmail account and reply as `admin@fig.systems`

|

||||||

|

- Use their API to programmatically send emails

|

||||||

|

- Use their SMTP servers to send as custom email addresses

|

||||||

|

- My self hosted services send notification emails as `no-reply@fig.systems` or as `service_name@fig.systems`

|

||||||

|

|

||||||

|

## 3. Setting up DNS

|

||||||

|

|

||||||

|

1. Buy a domain at porkbun.

|

||||||

|

|

||||||

|

Pick your favorite. I'll be using `figgy.foo` for this, there was a good deal on it.

|

||||||

|

|

||||||

|

2. Log into Mailgun

|

||||||

|

- Go to Send -> Sending -> Domains

|

||||||

|

- Click on "Add New Domain"

|

||||||

|

- Add `figgy.foo`, leave the rest blank, click Add Domain

|

||||||

|

|

||||||

|

3. Add DNS records to porkbun.

|

||||||

|

|

||||||

|

You'll be provided with records for sending, receiving, and tracking.

|

||||||

|

|

||||||

|

In Porkbun Domain Management select `DNS` when you hover over your new domain.

|

||||||

|

|

||||||

|

Copy the entries over. Make sure the Types match and that you leave off the `figgy.foo` portion in the `host` field in Porkbun, because Porkbun automatically appends your domain to whatever you enter there. If the record name is just `figgy.foo`, leave the host field blank.
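The host-field rule is easy to get wrong when copying by hand, so here is a minimal sketch of it as a shell function. `porkbun_host` is a hypothetical helper name, and `email.figgy.foo` in the usage note is only an example record name, not necessarily one Mailgun will give you.

```bash
#!/usr/bin/env bash
# porkbun_host RECORD_NAME DOMAIN
# Print what goes in the Porkbun "host" field for a full record name:
# the name with the trailing ".DOMAIN" removed, or nothing for the bare domain.
# Hypothetical helper for illustration only.
porkbun_host() {
  # Drop a trailing dot so "figgy.foo." and "figgy.foo" are treated the same
  local name="${1%.}" domain="${2%.}" host
  case "$name" in
    "$domain")
      # Record is for the domain itself: the host field stays blank
      printf '\n'
      ;;
    *".$domain")
      host=${name%".$domain"}
      printf '%s\n' "$host"
      ;;
    *)
      printf '%s\n' "error: $name is not under $domain" >&2
      return 1
      ;;
  esac
}
```

For example, `porkbun_host email.figgy.foo figgy.foo` prints `email`, while `porkbun_host figgy.foo figgy.foo` prints an empty line, meaning the field should be left blank.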

|

||||||

|

|

||||||

|

Copy all the Value fields from Mailgun to the Answer field in Porkbun and then click on Verify at the top right. You should see the status change to Active.

|

||||||

|

|

||||||

|

This is what your records in porkbun should look like.

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

## 4. Setting up Mailgun

|

||||||

|

|

||||||

|

### Routing emails.

|

||||||

|

|

||||||

|

1. Go to Send -> Receiving and Create a Route.

|

||||||

|

|

||||||

|

2. Expression Type -> Match Recipient

|

||||||

|

|

||||||

|

- Enter `admin@figgy.foo`

|

||||||

|

|

||||||

|

3. Enable Forward and fill in your personal address. For me that'd be my normal gmail address.

|

||||||

|

|

||||||

|

4. Set priority to 50 so you have space to add future routes before or after this route.

|

||||||

|

5. Add a simple description like "send to gmail" and Create the Route.

|

||||||

|

|

||||||

|

At the free tier you can only have 5 routes total. I only use the following:

|

||||||

|

|

||||||

|

1. Match `no-reply@figgy.foo`, Store and Notify and Stop processing.

|

||||||

|

2. Match `family@figgy.foo`, forward that email to multiple family members.

|

||||||

|

- Useful for events and family plans.

|

||||||

|

3. Match `Kindle@figgy.foo`, forward to my custom [Amazon provided](https://www.amazon.com/sendtokindle/email) kindle email address for sending epubs/pdfs.

|

||||||

|

- much friendlier address than what they make for you.

|

||||||

|

4. A catch all final route that just forwards to my personal address.

|

||||||

|

|

||||||

|

Number 4 is where most of the magic and utility of setting all this up happens. I can give out unlimited custom email addresses and I'll know who sent them by the address. That is, if I give out `businessName@fig.systems` I can later use that in gmail to filter, block, or search for anything related to that business. I can even see who sold my info if I start getting spam from that address.

|

||||||

46

content/docs/posts/eza_ls_git.md

Normal file

|

|

@ -0,0 +1,46 @@

|

||||||

|

---

|

||||||

|

title: "Rice"

|

||||||

|

description: "Making Linux Cool - Dynamically"

|

||||||

|

date: "2026-02-24"

|

||||||

|

tags:

|

||||||

|

- rice

|

||||||

|

- bash

|

||||||

|

categories:

|

||||||

|

- linux

|

||||||

|

---

|

||||||

|

I like the fun linux commands that rice out your setup but I don't like having to remember when/how to use them.

|

||||||

|

|

||||||

|

I've got my `.bashrc` that calls everything in `.bashrc.d/*`. In there I've started throwing in files with custom config options.

|

||||||

|

|

||||||

|

Here's a quick ls function that's context aware. I'll be expanding it as I learn better ways to use eza or similar listing tools.

|

||||||

|

|

||||||

|

```bash

|

||||||

|

alias ls='_ls'

|

||||||

|

# ls: use eza with git info inside git repos, plain ls elsewhere

|

||||||

|

_ls() {

|

||||||

|

if git rev-parse --is-inside-work-tree &>/dev/null; then

|

||||||

|

eza -l -h --git --git-repos --total-size --no-user --git-ignore --icons "$@"

|

||||||

|

else

|

||||||

|

command ls "$@"

|

||||||

|

fi

|

||||||

|

}

|

||||||

|

|

||||||

|

```

|

||||||

|

|

||||||

|

Turns this

|

||||||

|

```bash

|

||||||

|

figsystems on main

|

||||||

|

11:48 ❯ /usr/bin/ls

|

||||||

|

archetypes content hugo.yaml README.md themes

|

||||||

|

```

|

||||||

|

to this

|

||||||

|

```bash

|

||||||

|

figsystems on main

|

||||||

|

11:48 ❯ ls

|

||||||

|

Permissions Size Date Modified Git Git Repo Name

|

||||||

|

drwxr-xr-x@ 1.1k 5 Feb 14:18 -- - - archetypes

|

||||||

|

drwxr-xr-x@ 70k 5 Feb 14:18 -- - - content

|

||||||

|

.rw-r--r--@ 3.4k 5 Feb 15:57 -- - - hugo.yaml

|

||||||

|

.rw-r--r--@ 237 5 Feb 14:18 -- - - README.md

|

||||||

|

drwxr-xr-x@ 0 5 Feb 14:18 -- - - themes

|

||||||

|

```
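Since the same `.bashrc.d` files get copied between machines, a variant that also checks whether `eza` is installed may be useful. This is a minimal sketch under that assumption: `_ls_backend` is a hypothetical helper name, and the `eza` flags are the same ones used in the function above.

```bash
#!/usr/bin/env bash
# _ls_backend: print which lister would be used in the current directory.
# Hypothetical helper: picks eza only when it is installed AND we are in a git work tree.
_ls_backend() {
  if command -v eza >/dev/null 2>&1 &&
     git rev-parse --is-inside-work-tree >/dev/null 2>&1; then
    echo eza
  else
    echo ls
  fi
}

# _ls: same behavior as before, but falls back to plain ls when eza is missing.
_ls() {
  if [ "$(_ls_backend)" = eza ]; then
    eza -l -h --git --git-repos --total-size --no-user --git-ignore --icons "$@"
  else
    command ls "$@"
  fi
}
```

The `alias ls='_ls'` line from the original still applies. Splitting the decision into its own function also makes it easy to check from a prompt which branch a given directory would take.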

|

||||||

82

content/docs/posts/index_oops.md

Normal file

|

|

@ -0,0 +1,82 @@

|

||||||

|

---

|

||||||

|

title: "Index out of range in terraform"

|

||||||

|

date: "2026-03-26"

|

||||||

|

tags:

|

||||||

|

- terraform

|

||||||

|

- oops

|

||||||

|

categories:

|

||||||

|

- iac

|

||||||

|

---

|

||||||

|

|

||||||

|

Accidentally nuked half of some resources and broke DNS (yes, it is in fact always DNS). One of the first things I learned, and something that's in a lot of Terraform guides, is how `count` works. It's one of the meta-arguments you can use with most resources. The full set is:

|

||||||

|

|

||||||

|

```

|

||||||

|

depends_on

|

||||||

|

count

|

||||||

|

for_each

|

||||||

|

provider / providers

|

||||||

|

lifecycle

|

||||||

|

```

|

||||||

|

|

||||||

|

Here's an example for count before I show my oops.

|

||||||

|

|

||||||

|

```hcl

|

||||||

|

variable "names" {

|

||||||

|

default = ["alice", "bob", "carol"]

|

||||||

|

}

|

||||||

|

|

||||||

|

resource "aws_instance" "web" {

|

||||||

|

count = length(var.names)

|

||||||

|

ami = "ami-0c55b159cbfafe1f0"

|

||||||

|

instance_type = "t3.micro"

|

||||||

|

|

||||||

|

tags = {

|

||||||

|

Name = var.names[count.index]

|

||||||

|

}

|

||||||

|

}

|

||||||

|

```

|

||||||

|

|

||||||

|

Remove `"bob"` → `default = ["alice", "carol"]`. In the plan you'll see `web[1]` change in place:

|

||||||

|

|

||||||

|

```

|

||||||

|

~ Name = "bob" -> "carol"

|

||||||

|

```

|

||||||

|

|

||||||

|

|

||||||

|

carol shifts from index 2 → 1, so Terraform modifies the bob instance to become carol, and destroys the old carol. Two changes instead of one.

|

||||||

|

|

||||||

|

`count` is very quick and easy to use, but honestly I avoided it. If I read about a feature that has to be used with extra considerations, I'd rather use the pattern that doesn't let me screw up when my coffee hasn't fully kicked in.

|

||||||

|

|

||||||

|

---

|

||||||

|

|

||||||

|

In my case, I added an item to the middle of a list in a variable, but the resource was implemented with `count` instead of `for_each`.

|

||||||

|

|

||||||

|

Here's an implementation that guarantees uniqueness in the list to avoid collisions and doesn't care about order.

|

||||||

|

|

||||||

|

|

||||||

|

```hcl

|

||||||

|

# Before (vulnerable — index shuffle on any list change)

|

||||||

|

resource "aws_instance" "web" {

|

||||||

|

count = length(var.names)

|

||||||

|

ami = "ami-0c55b159cbfafe1f0"

|

||||||

|

instance_type = "t3.micro"

|

||||||

|

|

||||||

|

tags = {

|

||||||

|

Name = var.names[count.index]

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

# After (safe — each instance is an independent resource)

|

||||||

|

resource "aws_instance" "web" {

|

||||||

|

for_each = toset(var.names)

|

||||||

|

ami = "ami-0c55b159cbfafe1f0"

|

||||||

|

instance_type = "t3.micro"

|

||||||

|

|

||||||

|

tags = {

|

||||||

|

Name = each.key

|

||||||

|

}

|

||||||

|

}

|

||||||

|

```

|

||||||

|

|

||||||

|

Even better is that with the first method you access the resource like `aws_instance.web[0]` but with the 2nd you get a much more descriptive and assuring `aws_instance.web["bob"]`
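To see the difference without touching real infrastructure, here is a minimal shell sketch that mimics how the two addressing schemes compare an old and a new list. It is only a simulation of the bookkeeping, not Terraform's planner: `count_plan` and `for_each_plan` are hypothetical names, lists are passed as comma-separated strings, and only the name is compared.

```bash
#!/usr/bin/env bash
# Simulation only: compares comma-separated name lists the way
# index-keyed (count) and name-keyed (for_each) addresses would be compared.

# count_plan OLD NEW: walk both lists by position, like web[0], web[1], ...
count_plan() {
  local old="$1" new="$2" i=0 o n
  while [ -n "$old" ] || [ -n "$new" ]; do
    o=${old%%,*}
    n=${new%%,*}
    if [ -z "$new" ]; then
      printf '%s\n' "- web[$i] ($o) destroy"
    elif [ -z "$old" ]; then
      printf '%s\n' "+ web[$i] ($n) create"
    elif [ "$o" != "$n" ]; then
      printf '%s\n' "~ web[$i] $o -> $n"
    fi
    # Drop the first item from each list (or empty it if it was the last one)
    case "$old" in *,*) old=${old#*,} ;; *) old= ;; esac
    case "$new" in *,*) new=${new#*,} ;; *) new= ;; esac
    i=$((i + 1))
  done
}

# for_each_plan OLD NEW: compare by key, like web["alice"], web["bob"], ...
for_each_plan() {
  local old="$1" new="$2" rest name
  rest=$old
  while [ -n "$rest" ]; do
    name=${rest%%,*}
    case ",$new," in *",$name,"*) ;; *) printf '%s\n' "- web[\"$name\"] destroy" ;; esac
    case "$rest" in *,*) rest=${rest#*,} ;; *) rest= ;; esac
  done
  rest=$new
  while [ -n "$rest" ]; do
    name=${rest%%,*}
    case ",$old," in *",$name,"*) ;; *) printf '%s\n' "+ web[\"$name\"] create" ;; esac
    case "$rest" in *,*) rest=${rest#*,} ;; *) rest= ;; esac
  done
}
```

Running `count_plan alice,bob,carol alice,carol` reports a change on `web[1]` and a destroy on `web[2]`, while `for_each_plan alice,bob,carol alice,carol` reports only the destroy of `web["bob"]`.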

|

||||||

|

|

||||||

24

content/docs/posts/rosterhash.md

Normal file

|

|

@ -0,0 +1,24 @@

|

||||||

|

---

|

||||||

|

title: "RosterHash: fantasy football schedule viewer"

|

||||||

|

date: "2024-11-20"

|

||||||

|

tags:

|

||||||

|

- fantasy-football

|

||||||

|

- selfhosted

|

||||||

|

categories:

|

||||||

|

- projects

|

||||||

|

---

|

||||||

|

|

||||||

|

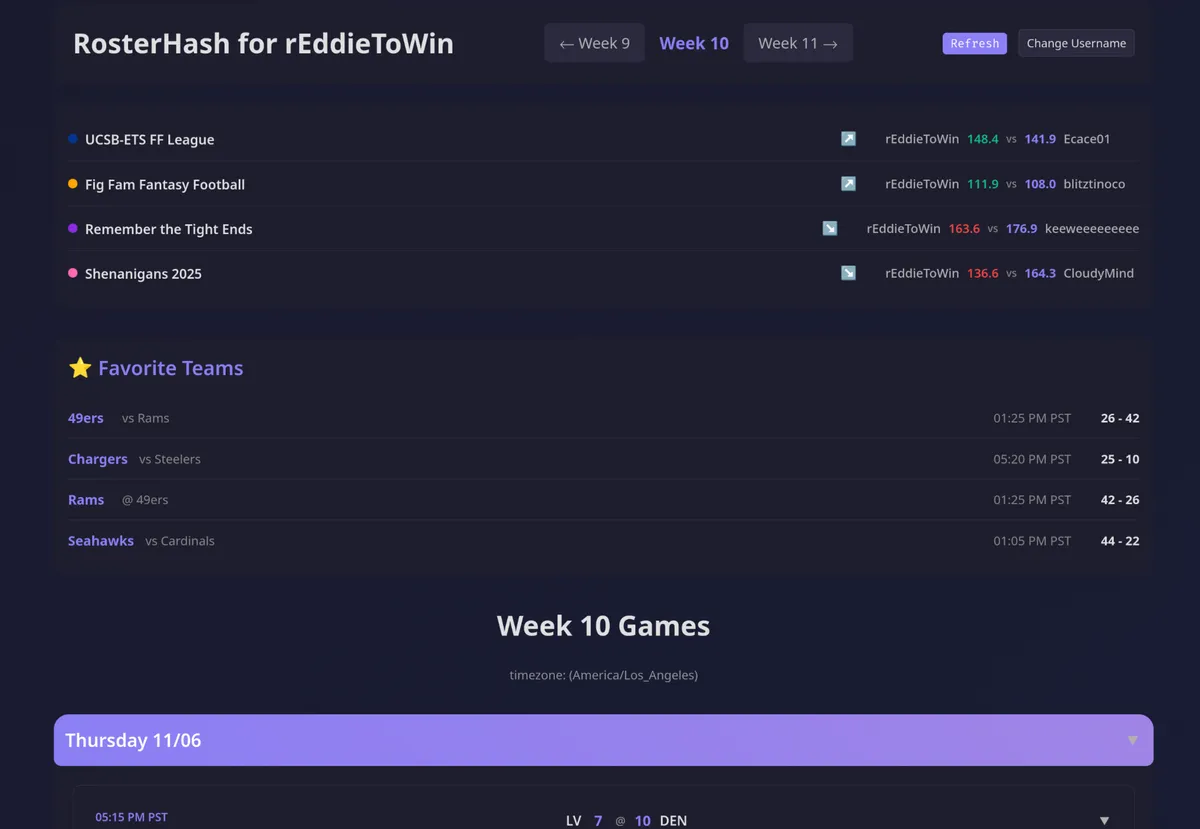

I joined another league this year.

|

||||||

|

|

||||||

|

I was losing track of who played when and what league.

|

||||||

|

|

||||||

|

So I made ~~GameTime (nope, that's taken)~~ [RosterHash](https://rosterhash.edfig.dev)!

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

Enter your Sleeper username and away you go.

|

||||||

|

|

||||||

|

Features:

|

||||||

|

- Can save up to 4 favorite teams for checking when they play and the score

|

||||||

|

- Shows your players per league per game and color codes it all.

|

||||||

|

- Completed games auto-collapse and get out of the way

|

||||||

116

content/docs/posts/rr-rss-nag.md

Normal file


|

|

@ -0,0 +1,116 @@

|

||||||

|

---

|

||||||

|

title: "Bypassing RoyalRoad's piracy nags in RSS Feeds"

|

||||||

|

date: "2024-11-20"

|

||||||

|

tags:

|

||||||

|

- rss

|

||||||

|

- freshrss

|

||||||

|

categories:

|

||||||

|

- selfhosted

|

||||||

|

---

|

||||||

|

# Issue

|

||||||

|

|

||||||

|

[Royal Road](https://www.royalroad.com/home) likes to annoy pirates. This is (arguably) good.

|

||||||

|

|

||||||

|

Royal Road doesn't care if they annoy RSS users. This is **bad**.

|

||||||

|

|

||||||

|

Here's a walkthrough of the problem and the fix.

|

||||||

|

|

||||||

|

### The Problem:

|

||||||

|

|

||||||

|

First, let's look at the full picture of why this is happening.

|

||||||

|

|

||||||

|

#### The Original Website HTML (Simplified)

|

||||||

|

|

||||||

|

When you visit a Royal Road chapter in your browser, the full page's HTML looks something like this. Your browser loads both the `<head>` section and the `<body>` section.

|

||||||

|

```html

|

||||||

|

|

||||||

|

<html>

|

||||||

|

<head>

|

||||||

|

<style>

|

||||||

|

.cjZhYjNmYjZkZmFjZTQ2YTk4OWQwYjRiMjRjZDQyOGRl {

|

||||||

|

display: none;

|

||||||

|

}

|

||||||

|

</style>

|

||||||

|

</head>

|

||||||

|

|

||||||

|

...

|

||||||

|

|

||||||

|

<body>

|

||||||

|

<div class="chapter-content">

|

||||||

|

|

||||||

|

<p class="cnMxYzY0ZjllNmVj...">

|

||||||

|

<span style="font-weight: 400">Nathan got the message...</span>

|

||||||

|

</p>

|

||||||

|

|

||||||

|

<p class="cnNiYTMwZmE4YjE2..."> </p>

|

||||||

|

|

||||||

|

<p class="cnNiOWQ0MDU1MDA2...">

|

||||||

|

<span style="font-weight: 400">Sarya waved her hand...</span>

|

||||||

|

</p>

|

||||||

|

|

||||||

|

<p class="cnM0NjAwNWU4Y2Vl..."> </p>

|

||||||

|

|

||||||

|

<span class="cjZhYjNmYjZkZmFjZTQ2YTk4OWQwYjRiMjRjZDQyOGRl">

|

||||||

|

<br>The narrative has been stolen; if detected on Amazon, report...<br>

|

||||||

|

</span>

|

||||||

|

|

||||||

|

</div>

|

||||||

|

|

||||||

|

</body>

|

||||||

|

</html>

|

||||||

|

```

|

||||||

|

|

||||||

|

|

||||||

|

On the live website, your browser reads the `<style>` tag in the `<head>` and knows to hide the spam `<span>`. You never see it.

|

||||||

|

|

||||||

|

### What FreshRSS Sees (The Problem)

|

||||||

|

|

||||||

|

I've told FreshRSS to grab only the content inside `.chapter-content`, which is the actual text of a chapter. So FreshRSS requests the page and then scrapes only this part:

|

||||||

|

|

||||||

|

```html

|

||||||

|

|

||||||

|

<p class="cnMxYzY0ZjllNmVj...">

|

||||||

|

<span style="font-weight: 400">Nathan got the message...</span>

|

||||||

|

</p>

|

||||||

|

|

||||||

|

<p class="cnNiYTMwZmE4YjE2..."> </p>

|

||||||

|

|

||||||

|

<p class="cnNiOWQ0MDU1MDA2...">

|

||||||

|

<span style="font-weight: 400">Sarya waved her hand...</span>

|

||||||

|

</p>

|

||||||

|

|

||||||

|

<p class="cnM0NjAwNWU4Y2Vl..."> </p>

|

||||||

|

|

||||||

|

<span class="cjZhYjNmYjZkZmFjZTQ2YTk4OWQwYjRiMjRjZDQyOGRl">

|

||||||

|

<br>The narrative has been stolen; if detected on Amazon, report...<br>

|

||||||

|

</span>

|

||||||

|

|

||||||

|

```

|

||||||

|

|

||||||

|

Since FreshRSS never saw the `<head>` or the `<style>` tag, it has no idea it's supposed to hide the spam `<span>`. It just displays all the text it found, resulting in this output in your feed reader:

|

||||||

|

|

||||||

|

Nathan got the message...

|

||||||

|

|

||||||

|

Sarya waved her hand...

|

||||||

|

|

||||||

|

The narrative has been stolen; if detected on Amazon, report...

|

||||||

|

|

||||||

|

This is the core of the issue: the content is hidden by a CSS rule that FreshRSS isn't loading, and the class names are random, so you can't just block the class.

|

||||||

|

|

||||||

|

### The Fix: CSS Selectors

|

||||||

|

|

||||||

|

You need to tell FreshRSS how to remove the unwanted elements based on their structure, not their random class names.

|

||||||

|

|

||||||

|

Go to: **Advanced** -> **CSS selector of the elements to remove**.

|

||||||

|

Paste this in the box:

|

||||||

|

|

||||||

|

```css

|

||||||

|

.chapter-content > span

|

||||||

|

```

|

||||||

|

|

||||||

|

|

||||||

|

This selector targets any `<span>` element that is a direct child (using `>`) of `.chapter-content`.

|

||||||

|

|

||||||

|

The spam text `<span class="cjZhY...">...</span>` matches this rule.

|

||||||

|

|

||||||

|

The actual story text `<span style="font-weight: 400">...</span>` is safe because it's a "grandchild" (it's inside a `<p>` tag), not a direct child.
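
To see why the direct-child rule is enough, here is a rough Python sketch that applies the same idea to a trimmed-down copy of the chapter HTML. This is not how FreshRSS implements its selector; it is a standalone illustration using only the standard library, the class `DirectChildSpanStripper` is made up, the class names in `sample` are shortened placeholders, and it assumes well-formed nesting.

```python
from html.parser import HTMLParser

# Void elements never get a closing tag, so they are not tracked on the stack.
VOID_TAGS = {"br", "hr", "img", "input", "meta", "link"}


class DirectChildSpanStripper(HTMLParser):
    """Collect text, skipping any <span> that is a direct child of .chapter-content."""

    def __init__(self):
        super().__init__()
        self.stack = []         # currently open elements as (tag, classes)
        self.skip_depth = None  # stack depth at which a removed <span> was opened
        self.text = []

    def handle_starttag(self, tag, attrs):
        if tag in VOID_TAGS:
            return
        classes = (dict(attrs).get("class") or "").split()
        parent_is_container = bool(self.stack) and "chapter-content" in self.stack[-1][1]
        self.stack.append((tag, classes))
        if self.skip_depth is None and tag == "span" and parent_is_container:
            self.skip_depth = len(self.stack)

    def handle_endtag(self, tag):
        if tag in VOID_TAGS or not self.stack:
            return
        if self.skip_depth == len(self.stack):
            self.skip_depth = None  # leaving the removed <span>
        self.stack.pop()

    def handle_data(self, data):
        if self.skip_depth is None and data.strip():
            self.text.append(data.strip())


sample = """
<div class="chapter-content">
  <p class="cnMx"><span style="font-weight: 400">Nathan got the message...</span></p>
  <p class="cnNi"><span style="font-weight: 400">Sarya waved her hand...</span></p>
  <span class="cjZh"><br>The narrative has been stolen; if detected on Amazon, report...<br></span>
</div>
"""

parser = DirectChildSpanStripper()
parser.feed(sample)
parser.close()
print(parser.text)
```

The story spans survive because their parent is a `<p>`, while the nag span is dropped because its parent is the `.chapter-content` container itself, regardless of what random class name it carries.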

|

||||||

|

|

@ -1,30 +1,28 @@

|

||||||

---

|

---

|

||||||

title: "RSS - Still Alive"

|

title: "RSS - Still Alive"

|

||||||

date: "2024-10-05"

|

date: "2024-10-05"

|

||||||

tags:

|

tags:

|

||||||

- rss

|

- rss

|

||||||

- selfhosted

|

categories:

|

||||||

categories:

|

|

||||||

- selfhosted

|

- selfhosted

|

||||||

---

|

---

|

||||||

|

|

||||||

# RSS - Still Very Useful

|

# RSS - Still Very Useful

|

||||||

|

|

||||||

I like having a centralized curated list of content. I'd rather go to a single page to catch up on new content instead of visiting or remembering to visit a bunch of different sites. I also don't like having to deal with cookies and sites tracking my every move.

|

I like having a centralized curated list of content. I'd rather go to a single page to catch up on new content instead of visiting or remembering to visit a bunch of different sites. I also don't like having to deal with cookies and sites tracking my every move.

|

||||||

|

|

||||||

Intro: [FreshRSS](https://freshrss.org/index.html) and [RSSHub](https://docs.rsshub.app/) with special guest [RSSHub-Radar](https://github.com/DIYgod/RSSHub-Radar)

|

I use: [FreshRSS](https://freshrss.org/index.html), [RSSHub](https://docs.rsshub.app/), and [RSSHub-Radar](https://github.com/DIYgod/RSSHub-Radar)

|

||||||

|

|

||||||

I used to only use RSS for blogs and other text based content but with the above tools I can RSS-ify most anything.

|

I used to only use RSS for blogs and other text based content but with the above tools I can RSS-ify most anything.

|

||||||

|

|

||||||

|

## The Flow of Content

|

||||||

|

|

||||||

## The Flow of Content ##

|

**FreshRSS** is the rss aggregator and can be used as the reader either on desktop or as a PWA on mobile. I host my own at [feeds.fig.systems](https://feeds.fig.systems). It's got a few slick themes and has options to [scrape webpages with x-paths](https://danq.me/2022/09/27/freshrss-xpath/). You provide a URL and use elements to select what you'd like to create your feed from, that's a lot of work per feed.

|

||||||

|

|

||||||

**FreshRSS** is the rss aggregator and can be used as the reader either on desktop or as a PWA on mobile. I host my own at [feeds.fig.systems](https://feeds.fig.systems). It's got a few slick themes and has options to [scrape webpages with x-paths](https://danq.me/2022/09/27/freshrss-xpath/). You provide a URL and use elements to select what you'd like to create your feed from, that's a lot of work per feed.

|

That was my first go at RSS-ifying everything until I learned about **RSSHub** which does the same thing but handles it automatically. I host my own instance at RSSHub.fig.systems. They provide pre-made "routes" which make turning many common content sources into feeds.

|

||||||

|

|

||||||

That was my first go at RSS-ifying everything until I learned about **RSSHub** which does the same thing but completely for you. I have my own hosted RSSHub.fig.systems. They provide pre-made "routes" which make turning many common content sources into feeds.

|

For example I could add the feed `rsshub.fig.systems/youtube/user/linustechtips/` to feeds.fig.systems and I'd get a new entry every time that channel uploads a new video.

|

||||||

|

|

||||||

For example I could add the feed ```rsshub.fig.systems/youtube/user/linustechtips/``` to feeds.fig.systems and I'd get a new entry every time that channel had a new feed.

|

Having to look up the routes can be annoying. Enter **RSSHub-Radar**, a nice browser extension that can automatically detect and provide the route for a given page you'd like to rss-ify. The extension can also be configured to format the url for whatever rss aggregator you use, FreshRSS or otherwise.

|

||||||

|

|

||||||

Having to look up the routes can be annoying. Enter **RSSHub-Radar**, a nice browser extension that can automatically detect and provide the route for a given page you'd like to rss-ify. The extension can also be configured to format the url for whatever rss aggregator you use, FreshRSS or otherwise.

|

It's worth going over [RSSHub's routes](https://docs.rsshub.app/routes/popular) to get an idea of what can be turned into an rss feed.

|

||||||

|

|

||||||

It's worth going over [RSSHub's routes](https://docs.rsshub.app/routes/popular) to get an idea of what can be turned into an rss feed.

|

|

||||||

408

content/docs/posts/security-groups.md

Normal file


|

|

@ -0,0 +1,408 @@

|

||||||

|

---

|

||||||

|

title: "Formatting AWS Security Groups for a VMware Migration"

|

||||||

|

date: "2025-02-05"

|

||||||

|

tags:

|

||||||

|

- terraform

|

||||||

|

- aws

|

||||||

|

- migration

|

||||||

|

categories:

|

||||||

|

- selfhosted

|

||||||

|

---

|

||||||

|

|

||||||

|

# The Problem

|

||||||

|

|

||||||

|

At work we're in the middle of a large lift and shift migration from VMware to AWS (for the same reason everyone is). Hundreds of servers across multiple departments, moved in waves.

|

||||||

|

|

||||||

|

The firewall rules for these servers come from everywhere. Palo Alto firewalls, host-based firewalls, department-specific switches, department-specific IT teams, random appliances that predate much of the current staff. Years of accumulated rules from multiple sources, and now they all need to become AWS security groups.

|

||||||

|

|

||||||

|

I needed to figure out how to format these rules in Terraform so that:

|

||||||

|

1. Coworkers completely new to IaC could read them

|

||||||

|

2. I could maintain them without losing my mind as rule counts climbed

|

||||||

|

3. PRs were reviewable

|

||||||

|

|

||||||

|

This is how the format evolved over three iterations.

|

||||||

|

|

||||||

|

# Iteration 1: Inline Rules

|

||||||

|

|

||||||

|

The most straightforward way to write a security group. Everything in one block.

|

||||||

|

|

||||||

|

```hcl

|

||||||

|

resource "aws_security_group" "web_server" {

|

||||||

|

name = "web-server"

|

||||||

|

description = "SG for web-server"

|

||||||

|

vpc_id = var.vpc_id

|

||||||

|

|

||||||

|

ingress {

|

||||||

|

description = "HTTPS from campus"

|

||||||

|

from_port = 443

|

||||||

|

to_port = 443

|

||||||

|

protocol = "tcp"

|

||||||

|

cidr_blocks = ["10.0.0.0/24"]

|

||||||

|

}

|

||||||

|

|

||||||

|

ingress {

|

||||||

|

description = "SSH from admin subnet"

|

||||||

|

from_port = 22

|

||||||

|

to_port = 22

|

||||||

|

protocol = "tcp"

|

||||||

|

cidr_blocks = ["10.100.0.0/24"]

|

||||||

|

}

|

||||||

|

|

||||||

|

egress {

|

||||||

|

description = "Allow all outbound"

|

||||||

|

from_port = 0

|

||||||

|

to_port = 0

|

||||||

|

protocol = "-1"

|

||||||

|

cidr_blocks = ["0.0.0.0/0"]

|

||||||

|

}

|

||||||

|

}

|

||||||

|

```

|

||||||

|

|

||||||

|

This works fine for a server with 3-4 rules and is the first example you usually come across if you search for "ec2 firewalls". It's easy to read and easy to explain to someone who's never seen Terraform before.

|

||||||

|

|

||||||

|

The problem is that any change to any inline rule forces Terraform to evaluate the entire security group. Add a CIDR to one ingress block and the plan output gets noisy. It also doesn't play well with `for_each` if you want to loop over CIDRs for a single port.

|

||||||

|

|

||||||

|

# Iteration 2: Separate Rule Resources

|

||||||

|

|

||||||

|

Breaking the rules out into their own resources using `aws_vpc_security_group_ingress_rule` and `aws_vpc_security_group_egress_rule`.

|

||||||

|

|

||||||

|

```hcl

|

||||||

|

resource "aws_security_group" "web_server" {

|

||||||

|

description = "SG for web-server"

|

||||||

|

vpc_id = var.vpc_id

|

||||||

|

|

||||||

|

tags = {

|

||||||

|

Name = "web-server"

|

||||||

|

Source = "Palo Alto Firewall"

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

# Egress

|

||||||

|

resource "aws_vpc_security_group_egress_rule" "web_server_allow_all_outbound" {

|

||||||

|

security_group_id = aws_security_group.web_server.id

|

||||||

|

ip_protocol = "-1"

|

||||||

|

cidr_ipv4 = "0.0.0.0/0"

|

||||||

|

|

||||||

|

tags = {

|

||||||

|

Name = "allow-all-outbound"

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

# HTTPS from campus

|

||||||

|

resource "aws_vpc_security_group_ingress_rule" "web_server_https_443" {

|

||||||

|

for_each = var.https_443_cidrs

|

||||||

|

security_group_id = aws_security_group.web_server.id

|

||||||

|

cidr_ipv4 = each.key

|

||||||

|

description = each.value

|

||||||

|

ip_protocol = "tcp"

|

||||||

|

from_port = 443

|

||||||

|

to_port = 443

|

||||||

|

|

||||||

|

tags = {

|

||||||

|

Name = "HTTPS-443-${replace(each.key, "/", "-")}"

|

||||||

|

Rule = "tcp-443"

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

# SSH from admin subnet

|

||||||

|

resource "aws_vpc_security_group_ingress_rule" "web_server_ssh_22" {

|

||||||

|

for_each = var.ssh_22_cidrs

|

||||||

|

security_group_id = aws_security_group.web_server.id

|

||||||

|

cidr_ipv4 = each.key

|

||||||

|

description = each.value

|

||||||

|

ip_protocol = "tcp"

|

||||||

|

from_port = 22

|

||||||

|

to_port = 22

|

||||||

|

|

||||||

|

tags = {

|

||||||

|

Name = "SSH-22-${replace(each.key, "/", "-")}"

|

||||||

|

Rule = "tcp-22"

|

||||||

|

}

|

||||||

|

}

|

||||||

|

```

|

||||||

|

|

||||||

|

With variables like:

|

||||||

|

|

||||||

|

```hcl

|

||||||

|

variable "https_443_cidrs" {

|

||||||

|

type = map(string)

|

||||||

|

default = {

|

||||||

|

"10.0.0.0/24" = "Campus network"

|

||||||

|

"10.100.0.0/24" = "Admin subnet"

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

variable "ssh_22_cidrs" {

|

||||||

|

type = map(string)

|

||||||

|

default = {

|

||||||

|

"10.100.0.0/24" = "Admin subnet"

|

||||||

|

}

|

||||||

|

}

|

||||||

|

```

|

||||||

|

|

||||||

|

This is better. Each rule is its own resource so Terraform plans are cleaner. Adding a CIDR to a port only shows that one rule changing. The `for_each` on a map of CIDR-to-description means you can see at a glance what each IP range is for.

|

||||||

|

|

||||||

|

I used this format for the 2nd wave. It worked. But by the next few waves we were moving more servers per wave and each server had its own set of variables. The variable files were getting long and hard to cross-reference with the rules.

|

||||||

|

|

||||||

|

Everything was also moved into a `$WORKSPACE/modules/security-groups/` directory to keep it organized. One file per server's rules, one file per server's variables.

|

||||||

|

|

||||||

|

# Iteration 3: Locals with Structured Data

|

||||||

|

|

||||||

|

By the time we were moving double digit servers per wave, the variable-per-port approach was getting hard to maintain. Too many variable files, too much scrolling back and forth to understand what a server's rules actually looked like.

|

||||||

|

|

||||||

|

I switched to using `locals` with a structured list. All the rules for a server live in one block. Each entry defines the port, protocol, and every CIDR that needs access on that port.

|

||||||

|

|

||||||

|

```hcl

|

||||||

|

locals {

|

||||||

|

web_server_ports = [

|

||||||

|

# HTTPS

|

||||||

|

{

|

||||||

|

protocol = "tcp"

|

||||||

|

from = 443

|

||||||

|

to = 443

|

||||||

|

name = "https-443"

|

||||||

|

cidrs = {

|

||||||

|

"10.0.0.0/24" = "Campus network"

|

||||||

|

"10.100.0.0/24" = "Admin subnet"

|

||||||

|

}

|

||||||

|

},

|

||||||

|

# SSH

|

||||||

|

{

|

||||||

|

protocol = "tcp"

|

||||||

|

from = 22

|

||||||

|

to = 22

|

||||||

|

name = "ssh-22"

|

||||||

|

cidrs = {

|

||||||

|

"10.100.0.0/24" = "Admin subnet"

|

||||||

|

}

|

||||||

|

},

|

||||||

|

# RDP

|

||||||

|

{

|

||||||

|

protocol = "tcp"

|

||||||

|

from = 3389

|

||||||

|

to = 3389

|

||||||

|

name = "rdp-3389"

|

||||||

|

cidrs = {

|

||||||

|

"10.100.0.0/24" = "Admin subnet"

|

||||||

|

}

|

||||||

|

},

|

||||||

|

# HTTP

|

||||||

|

{

|

||||||

|

protocol = "tcp"

|

||||||

|

from = 80

|

||||||

|

to = 80

|

||||||

|

name = "http-80"

|

||||||

|

cidrs = {

|

||||||

|

"10.0.0.0/24" = "Campus network"

|

||||||

|

}

|

||||||

|

},

|

||||||

|

]

|

||||||

|

|

||||||

|

# Flatten into individual rules

|

||||||

|

web_server_rules = flatten([

|

||||||

|

for port_config in local.web_server_ports : [

|

||||||

|

for cidr, description in port_config.cidrs : {

|

||||||

|

key = "${port_config.name}-${replace(cidr, "/", "-")}"

|

||||||

|

protocol = port_config.protocol

|

||||||

|

from_port = port_config.from

|

||||||

|

to_port = port_config.to

|

||||||

|

cidr = cidr

|

||||||

|

description = description

|

||||||

|

rule_name = port_config.name

|

||||||

|

}

|

||||||

|

]

|

||||||

|

])

|

||||||

|

|

||||||

|

# How many rules total

|

||||||

|

web_server_total_rule_count = length(local.web_server_rules)

|

||||||

|

|

||||||

|

# How many SGs needed (AWS has a rules-per-SG limit)

|

||||||

|

web_server_sg_count = max(1, ceil(local.web_server_total_rule_count / var.max_rules_per_sg))

|

||||||

|

|

||||||

|

# Chunk rules across SGs

|

||||||

|

web_server_rules_chunked = {

|

||||||

|

for sg_index in range(local.web_server_sg_count) : sg_index => [

|

||||||

|

for rule_index in range(

|

||||||

|

sg_index * var.max_rules_per_sg,

|

||||||

|

min((sg_index + 1) * var.max_rules_per_sg, local.web_server_total_rule_count)

|

||||||

|

) : local.web_server_rules[rule_index]

|

||||||

|

]

|

||||||

|

}

|

||||||

|

}

|

||||||

|

```
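
As a rough illustration of what the `flatten` and chunking expressions above produce, here is a Python sketch of the same arithmetic on the same four port entries. The limit of 3 rules per SG is an assumption picked only to force an overflow; the Terraform reads the real value from `var.max_rules_per_sg`.

```python
import math

# Hypothetical limit for illustration only; the Terraform uses var.max_rules_per_sg.
MAX_RULES_PER_SG = 3

web_server_ports = [
    {"protocol": "tcp", "from": 443, "to": 443, "name": "https-443",
     "cidrs": {"10.0.0.0/24": "Campus network", "10.100.0.0/24": "Admin subnet"}},
    {"protocol": "tcp", "from": 22, "to": 22, "name": "ssh-22",
     "cidrs": {"10.100.0.0/24": "Admin subnet"}},
    {"protocol": "tcp", "from": 3389, "to": 3389, "name": "rdp-3389",
     "cidrs": {"10.100.0.0/24": "Admin subnet"}},
    {"protocol": "tcp", "from": 80, "to": 80, "name": "http-80",
     "cidrs": {"10.0.0.0/24": "Campus network"}},
]

# One entry per (port, CIDR) pair, like the nested `for` inside flatten().
# sorted() mirrors Terraform iterating map keys in lexical order.
rules = [
    {
        "key": f"{port['name']}-{cidr.replace('/', '-')}",
        "protocol": port["protocol"],
        "from_port": port["from"],
        "to_port": port["to"],
        "cidr": cidr,
        "description": description,
        "rule_name": port["name"],
    }
    for port in web_server_ports
    for cidr, description in sorted(port["cidrs"].items())
]

# Same arithmetic as web_server_sg_count and web_server_rules_chunked.
sg_count = max(1, math.ceil(len(rules) / MAX_RULES_PER_SG))
chunked = {
    sg_index: rules[sg_index * MAX_RULES_PER_SG:(sg_index + 1) * MAX_RULES_PER_SG]
    for sg_index in range(sg_count)
}

for sg_index, chunk in chunked.items():
    print(sg_index, [rule["key"] for rule in chunk])
```

With these inputs there are five rules, so chunk `0` (the primary SG) holds the first three and chunk `1` (the first overflow SG) holds the remaining two.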

|

||||||

|

|

||||||

|

The security group definition handles overflow automatically. If a server has more rules than `var.max_rules_per_sg` allows per SG, Terraform creates additional SGs and distributes the rules across them. Neither I nor anyone on my team had to count rules or split them across security groups by hand. It all gets generated dynamically.

|

||||||

|

|

||||||

|

```hcl

|

||||||

|

# Primary SG

|

||||||

|

resource "aws_security_group" "web_server" {

|

||||||

|

name = "web-server"

|

||||||

|

description = "SG for web-server"

|

||||||

|

vpc_id = var.vpc_id

|

||||||

|

|

||||||

|

lifecycle {

|

||||||

|

create_before_destroy = true

|

||||||

|

}

|

||||||

|

|

||||||

|

tags = {

|

||||||

|

Name = "web-server"

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

# Overflow SGs (created only if needed)

|

||||||

|

resource "aws_security_group" "web_server_overflow" {

|

||||||

|

for_each = { for idx in range(1, local.web_server_sg_count) : idx => idx }

|

||||||

|

|

||||||

|

name = "web-server-overflow-${each.value}"

|

||||||

|

description = "SG for web-server (Overflow ${each.value})"

|

||||||

|

vpc_id = var.vpc_id

|

||||||

|

|

||||||

|

lifecycle {

|

||||||

|

create_before_destroy = true

|

||||||

|

}

|

||||||

|

|

||||||

|

tags = {

|

||||||

|

Name = "web-server-overflow-${each.value}"

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

# Egress (primary SG only)

|

||||||

|

resource "aws_vpc_security_group_egress_rule" "web_server_allow_all_outbound" {

|

||||||

|

security_group_id = aws_security_group.web_server.id

|

||||||

|

ip_protocol = "-1"

|

||||||

|

cidr_ipv4 = "0.0.0.0/0"

|

||||||

|

|

||||||

|

tags = {

|

||||||

|

Name = "allow-all-outbound"

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

# Ingress for primary SG

|

||||||

|

resource "aws_vpc_security_group_ingress_rule" "web_server_ingress" {

|

||||||

|

for_each = {

|

||||||

|

for rule in local.web_server_rules_chunked[0] :

|

||||||

|

rule.key => rule

|

||||||

|

}

|

||||||

|

|

||||||

|

security_group_id = aws_security_group.web_server.id

|

||||||

|

cidr_ipv4 = each.value.cidr

|

||||||

|

description = each.value.description

|

||||||

|

ip_protocol = each.value.protocol

|

||||||

|

from_port = each.value.protocol == "-1" ? null : each.value.from_port

|

||||||

|

to_port = each.value.protocol == "-1" ? null : each.value.to_port

|

||||||

|

|

||||||

|

tags = {

|

||||||

|

Name = each.value.key

|

||||||

|

Rule = each.value.rule_name

|

||||||

|

}

|

||||||

|

}

|

||||||

|

|

||||||

|

# Ingress for overflow SGs

|

||||||

|

resource "aws_vpc_security_group_ingress_rule" "web_server_overflow_ingress" {

|

||||||

|

for_each = merge([

|

||||||

|

for sg_index, sg in aws_security_group.web_server_overflow : {

|

||||||

|

for rule in local.web_server_rules_chunked[sg_index] :

|

||||||

|

"${sg_index}-${rule.key}" => {

|

||||||

|

sg_id = sg.id

|

||||||

|

cidr = rule.cidr

|

||||||

|

description = rule.description

|

||||||

|

protocol = rule.protocol

|

||||||

|

from_port = rule.from_port

|

||||||

|

to_port = rule.to_port

|

||||||

|

key = rule.key

|

||||||

|

rule_name = rule.rule_name

|

||||||

|

}

|

||||||

|

}

|

||||||

|

]...)

|

||||||

|

|

||||||

|